Recognition: unknown

Seeing the Whole Elephant: A Benchmark for Failure Attribution in LLM-based Multi-Agent Systems

Pith reviewed 2026-05-08 08:59 UTC · model grok-4.3

The pith

Full execution traces improve failure attribution accuracy by up to 76% over partial outputs in LLM-based multi-agent systems.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

TraceElephant is a benchmark for failure attribution in LLM-based multi-agent systems that supplies full execution traces and reproducible environments. Evaluation across attribution techniques and configurations shows full traces raise accuracy by up to 76 percent over partial-observation baselines, because omitted inputs and contexts frequently conceal the decisive failure causes. The benchmark therefore aligns evaluation with the complete information developers actually use during debugging.

What carries the argument

TraceElephant benchmark, which supplies complete execution traces including all agent inputs, outputs, and interaction contexts for controlled, reproducible failure scenarios.

If this is right

- Attribution techniques identify the responsible agent and decisive step more reliably when supplied with complete traces rather than outputs alone.

- Benchmarks and evaluations of new attribution methods should adopt full observability to match practical debugging conditions.

- The benchmark provides a shared foundation for developing techniques that make multi-agent systems more transparent by exposing hidden failure causes.

- Follow-up work can systematically compare attribution approaches under the full-trace setting to measure progress toward reliable diagnosis.

Where Pith is reading between the lines

- Designers of multi-agent frameworks may add comprehensive logging as a default to enable better post-failure analysis.

- The emphasis on full context could link failure attribution research to wider efforts in making agent decisions explainable and accountable.

- Applying TraceElephant-style evaluations to open-source agent platforms would test whether the accuracy gains hold outside the paper's controlled scenarios.

- Automated tools could eventually use full traces to detect and flag potential failures before they complete.

Load-bearing premise

The failure cases and environments in the benchmark are representative of the failure modes developers actually encounter in real LLM-based multi-agent deployments.

What would settle it

Re-evaluating the same attribution techniques on a fresh set of failures drawn from production LLM multi-agent systems and finding no meaningful accuracy gain from full traces over partial ones would undermine the reported performance difference.

Figures

read the original abstract

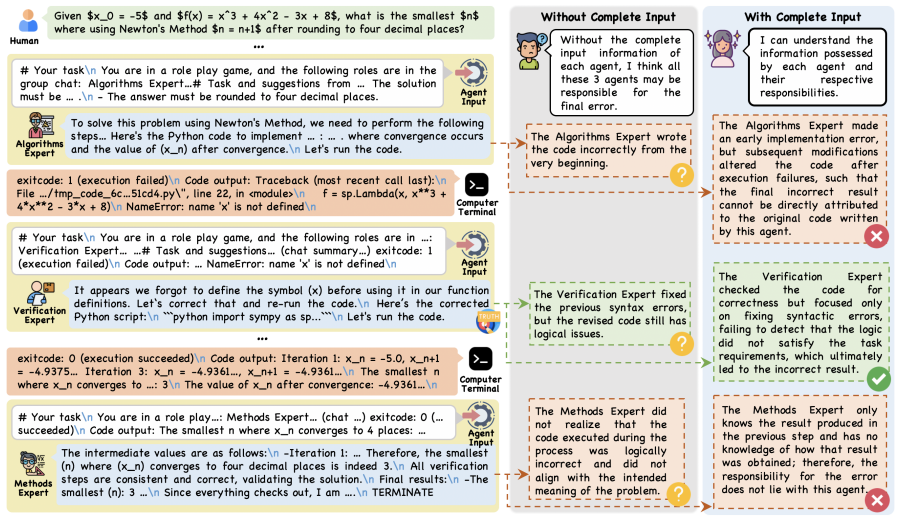

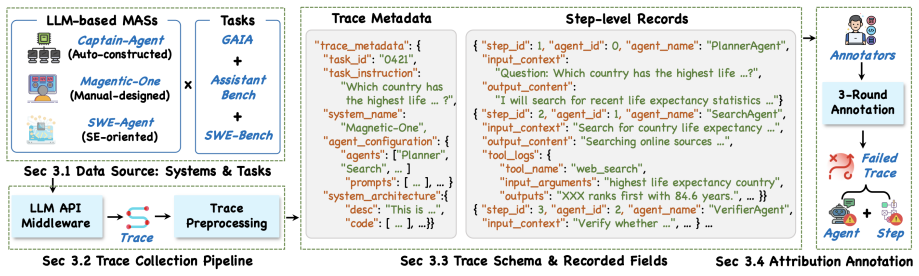

Failure attribution, i.e., identifying the responsible agent and decisive step of a failure, is particularly challenging in LLM-based multi-agent systems (MAS) due to their natural-language reasoning, nondeterministic outputs, and intricate interaction dynamics. A reliable benchmark is therefore essential to guide and evaluate attribution techniques. Yet existing benchmarks rely on partially observable traces that capture only agent outputs, omitting the inputs and context that developers actually use when debugging. We argue that failure attribution should be studied under full execution observability, aligning with real-world developer-facing scenarios where complete traces, rather than only outputs, are accessible for diagnosis. To this end, we introduce TraceElephant, a benchmark designed for failure attribution with full execution traces and reproducible environments. We then systematically evaluate failure attribution techniques across various configurations. Specifically, full traces improve attribution accuracy by up to 76\% over a partial-observation counterpart, confirming that missing inputs obscure many failure causes. TraceElephant provides a foundation for follow-up failure attribution research, promoting evaluation practices that reflect real-world debugging and supporting the development of more transparent MASs.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

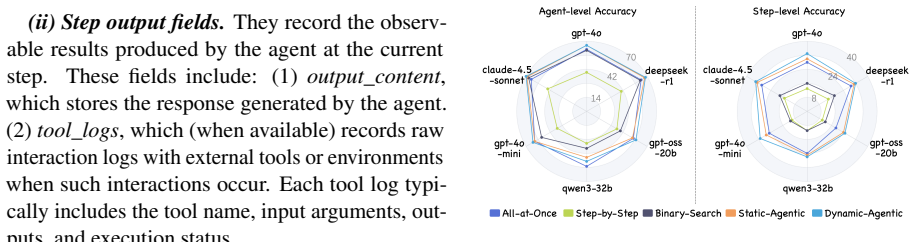

Summary. The paper introduces TraceElephant, a benchmark for failure attribution in LLM-based multi-agent systems that supplies full execution traces (including inputs and context) rather than the partial traces (agent outputs only) used in prior work. It evaluates multiple attribution techniques and reports that full traces yield up to 76% higher attribution accuracy than partial-observation baselines, arguing that this setup better matches real-world developer debugging and that missing context obscures many failure causes.

Significance. If the quantitative result and benchmark construction hold, the work is significant because it supplies a reproducible, full-observability testbed for a practically important problem in LLM-MAS. The explicit comparison of full versus partial traces provides concrete evidence that context matters for attribution, which could steer future method development toward more transparent systems. The provision of reproducible environments is a clear strength that supports follow-up research.

major comments (2)

- [§4 (Evaluation) and Table 2] §4 (Evaluation) and Table 2: the headline claim of 'up to 76% improvement' in attribution accuracy is load-bearing for the paper's central thesis, yet the manuscript provides insufficient detail on the precise definition of the accuracy metric, how ground-truth attributions were established by human or automated judges, and whether failure cases were selected before or after observing the full traces. Without these specifics the numerical delta cannot be fully interpreted or reproduced.

- [§3 (Benchmark Construction)] §3 (Benchmark Construction): the broader claim that 'missing inputs obscure many failure causes' in practice rests on the assumption that TraceElephant's environments and injected failures are representative of real LLM-MAS deployments. The failure-injection process and environment selection are described as synthetic and reproducible, but the paper does not present evidence (e.g., comparison to logged production traces or diversity metrics) that the chosen failure modes reflect the nondeterministic, multi-turn interactions developers actually encounter.

minor comments (2)

- [Abstract] Abstract: the phrase 'various configurations' is used without enumeration; listing the main axes (e.g., agent count, trace length, attribution method) would improve immediate readability.

- [§2 (Related Work)] §2 (Related Work): the discussion of prior MAS benchmarks could more explicitly contrast their partial-observability design with TraceElephant's full-trace approach, perhaps in a small comparison table.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below and will revise the manuscript to improve clarity and provide additional supporting details.

read point-by-point responses

-

Referee: [§4 (Evaluation) and Table 2] §4 (Evaluation) and Table 2: the headline claim of 'up to 76% improvement' in attribution accuracy is load-bearing for the paper's central thesis, yet the manuscript provides insufficient detail on the precise definition of the accuracy metric, how ground-truth attributions were established by human or automated judges, and whether failure cases were selected before or after observing the full traces. Without these specifics the numerical delta cannot be fully interpreted or reproduced.

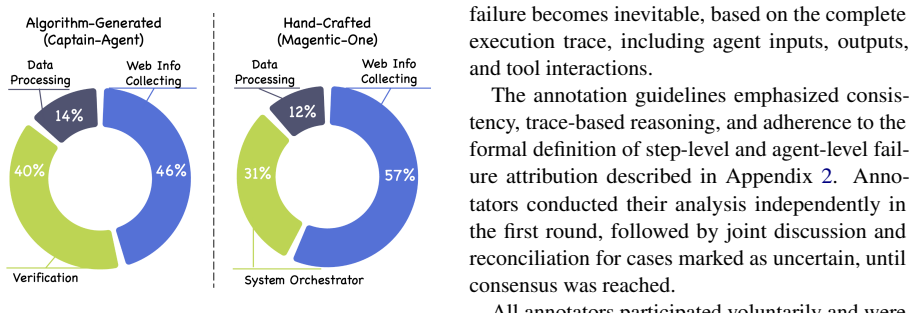

Authors: We agree that the current description of the evaluation protocol lacks sufficient explicit detail for full reproducibility and interpretation. Accuracy is defined as the fraction of test cases in which the attributed agent-step pair exactly matches the ground-truth failure location. Ground-truth labels were produced by two independent human experts who inspected the complete execution traces (including all inputs, outputs, and context); disagreements were resolved through discussion, yielding an inter-annotator agreement of 0.87 Cohen's kappa. Failure cases were chosen according to a pre-defined taxonomy of error types before any traces were generated or inspected, ensuring no post-hoc selection bias. In the revised manuscript we will add a new subsection in §4 that formally defines the metric, describes the annotation protocol, reports agreement statistics, and includes a small illustrative example. This clarification will make the reported 76% improvement fully interpretable. revision: yes

-

Referee: [§3 (Benchmark Construction)] §3 (Benchmark Construction): the broader claim that 'missing inputs obscure many failure causes' in practice rests on the assumption that TraceElephant's environments and injected failures are representative of real LLM-MAS deployments. The failure-injection process and environment selection are described as synthetic and reproducible, but the paper does not present evidence (e.g., comparison to logged production traces or diversity metrics) that the chosen failure modes reflect the nondeterministic, multi-turn interactions developers actually encounter.

Authors: We acknowledge that stronger evidence of ecological validity would be desirable. However, direct comparison to proprietary production logs is infeasible for a public benchmark due to confidentiality constraints. Our failure modes were derived from a systematic review of failure categories reported across recent LLM-MAS literature (reasoning errors, inter-agent miscommunication, tool misuse, and context loss). In the revision we will augment §3 with (i) a table reporting benchmark diversity statistics (agent counts 3–12, average interaction length, distribution of failure types) and (ii) an explicit discussion of how the injected failures map to patterns described in prior work. We will also temper the claim language to emphasize that the benchmark demonstrates the value of full observability under controlled, reproducible conditions rather than claiming statistical equivalence to all production deployments. This partial revision addresses the concern while preserving the benchmark's intended purpose as a standardized testbed. revision: partial

Circularity Check

No circularity: empirical comparison on self-constructed benchmark does not reduce to inputs by construction

full rationale

The paper's central result is an empirical measurement: full traces yield up to 76% higher attribution accuracy than partial traces when both are evaluated on the TraceElephant failure cases. This is a direct head-to-head comparison inside the benchmark rather than a fitted parameter renamed as a prediction, a self-definition, or a load-bearing self-citation. No equations or uniqueness theorems are invoked that collapse back to the authors' prior inputs. The benchmark construction and failure injection are presented as design choices whose representativeness is an external-validity question, not a circularity issue. The derivation chain is therefore self-contained and non-circular.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Full execution traces (inputs, context, and outputs) are the information developers actually use when diagnosing MAS failures.

- domain assumption The benchmark environments produce failures whose causes can be unambiguously attributed given full traces.

Reference graph

Works this paper leans on

-

[1]

Who is introducing the failure? automatically attributing failures of multi-agent systems via spec- trum analysis.Preprint, arXiv:2509.13782. Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Peiyi Wang, Qihao Zhu, Runxin Xu, Ruoyu Zhang, Shirong Ma, Xiao Bi, and 1 others. 2025. DeepSeek- R1 incentivizes reasoning in llms through reinforce- ment learning...

-

[2]

Diego Maldonado, Edison Cruz, Jackeline Abad Torres, Patricio J Cruz, and Silvana del Pilar Gamboa Ben- itez

A survey on LLM-based multi-agent sys- tems: workflow, infrastructure, and challenges.Vici- nagearth, 1(1):9. Diego Maldonado, Edison Cruz, Jackeline Abad Torres, Patricio J Cruz, and Silvana del Pilar Gamboa Ben- itez. 2024. Multi-agent systems: A survey about its components, framework and workflow.IEEE Access, 12:80950–80975. Ghassan Misherghi and Zhend...

2024

-

[3]

Adaptive in-conversation team building for language model agents.arXiv preprint arXiv:2405.19425,

Adaptive in-conversation team building for language model agents.Preprint, arXiv:2405.19425. MiroMind AI Team. 2025. MiroFlow: A high- performance open-source research agent framework. https://github.com/MiroMindAI/MiroFlow. W Eric Wong, Ruizhi Gao, Yihao Li, Rui Abreu, and Franz Wotawa. 2016. A survey on software fault localization.IEEE Transactions on S...

-

[4]

Qwen3 technical report.arXiv preprint arXiv:2505.09388. John Yang, Carlos E. Jimenez, Alexander Wettig, Kil- ian Lieret, Shunyu Yao, Karthik Narasimhan, and Ofir Press. 2024. SWE-Agent: agent-computer in- terfaces enable automated software engineering. In Proceedings of the 38th International Conference on Neural Information Processing Systems, NIPS ’24, ...

work page internal anchor Pith review arXiv 2024

-

[5]

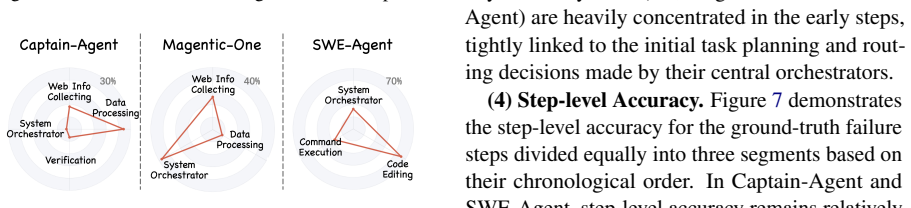

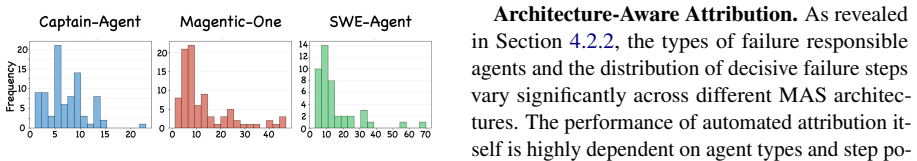

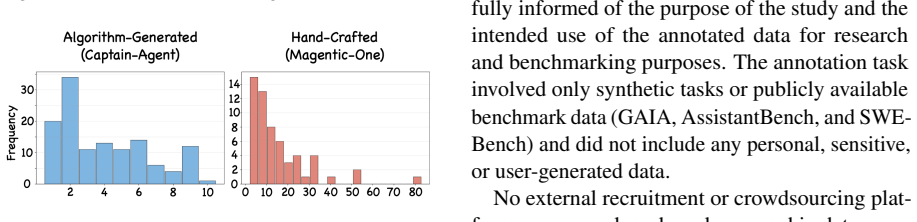

These trends align closely with those observed in TraceElephant (see Figure 4 and 6). The slight difference lies in the average num- ber of LLM invocations: in Who&When, Captain- Agent and Magentic-One respectively have an av- erage of 9.6 and 28.8 calls, while these figures for TraceElephant are 20.5 and 29.3. It is also worth noting that the step count ...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.