Recognition: 3 theorem links

· Lean TheoremAOCI: Symbolic-Semantic Indexing for Practical Repository-Scale Code Understanding with LLMs

Pith reviewed 2026-05-08 17:59 UTC · model grok-4.3

The pith

AOCI provides LLMs a stable symbolic-semantic blueprint of large codebases for consistent, defect-free understanding.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

AOCI builds a repository representation from encoding rules followed by one entry per code unit; each entry joins a symbolic tag that supplies architectural coordinates to semantic content that records function, dependencies, and constraints, yielding a consistent, stable view the LLM consumes in one pass, with incremental regeneration of only changed entries to keep the blueprint aligned with the code.

What carries the argument

The AOCI index of encoding rules plus per-unit symbolic-semantic entries that together map the system's architecture, dependencies, and design decisions into a single readable structure.

If this is right

- AOCI achieves higher task accuracy than all deployable baselines and approaches oracle performance on repository-scale code tasks.

- On 19 industrial tasks across five systems, AOCI yields zero defects while agent tools introduce defects in 12 cases.

- Token consumption drops by factors of 4 to 130 compared with mainstream agent-based tools.

- The accuracy and efficiency gains increase with task complexity.

- Only entries for changed code units need regeneration, keeping maintenance cost proportional to the scope of edits.

Where Pith is reading between the lines

- Teams could treat AOCI indices as living artifacts checked into version control to support repeated AI-assisted maintenance.

- The same symbolic-semantic pairing might reduce context costs when LLMs work with other large structured artifacts such as database schemas or configuration systems.

- Pre-built systematic representations could replace repeated query-time retrieval as the default pattern for LLM interaction with complex repositories.

Load-bearing premise

The encoding rules and entries can be generated and kept up to date so they faithfully capture architecture, dependencies, and key decisions without material loss or systematic bias across codebases and languages.

What would settle it

A large codebase and task suite in which an LLM guided by an AOCI index produces a final-state defect or consumes token counts comparable to agent tools, or in which the index misses a critical dependency that the oracle correctly uses.

Figures

read the original abstract

Large language models struggle with understanding codebases beyond a certain scale -- repositories with hundreds of thousands of lines of code. Existing methods -- retrieval, summarization, agent exploration -- each construct a different view at query time. The view varies between runs, and what persists is typically ad-hoc rather than systematic. This paper introduces AOCI (AI-Oriented Code Indexing): a symbolic-semantic repository representation -- a structured blueprint that an LLM can read in a single pass to gain a complete repository-level picture of the system's architecture, dependencies, and key design decisions before any task. An AOCI index consists of encoding rules followed by entries, with one entry per code unit (file or database table). Each entry pairs a symbolic tag with semantic content. The symbolic component provides architectural coordinates; the semantic component carries function, dependencies, and constraints. Together they form a consistent, stable representation of the entire system. Index maintenance is incremental: when code changes, only affected entries are regenerated under protocol rules. The AOCI Platform automates this process, keeping the blueprint aligned with the code. We evaluated AOCI on four projects across three LLMs and six context conditions (2,160 evaluations). AOCI outperforms all deployable baselines and ranks second only to the Oracle upper bound in overall accuracy. On 19 industrial tasks across five systems, AOCI produced zero final-state defects, while three mainstream agent-based tools introduced defects in 12 tasks and consumed 4--130$\times$ more tokens ($p < 0.001$). The advantage grows with task complexity.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces AOCI (AI-Oriented Code Indexing), a symbolic-semantic repository representation consisting of encoding rules and one entry per code unit (file or database table) pairing a symbolic tag with semantic content describing function, dependencies, and constraints. It claims this provides LLMs a complete, stable, single-pass view of large codebases, with incremental maintenance via the AOCI Platform. Empirical results include outperformance over baselines in 2,160 evaluations across four projects, three LLMs, and six context conditions, plus zero final-state defects on 19 industrial tasks across five systems (versus defects in 12 tasks for agent-based tools) with 4-130x token savings (p < 0.001).

Significance. If the index generation proves faithful, the work has substantial significance for practical LLM use in software engineering by replacing variable, high-cost retrieval or agent methods with a persistent, efficient blueprint. The scale of 2,160 evaluations with statistical testing and the focus on industrial tasks are notable strengths that could influence repository-scale code understanding if the completeness assumption holds.

major comments (1)

- [Abstract] Abstract: The central claim of zero final-state defects on 19 industrial tasks (with p < 0.001) is load-bearing on the assumption that automatically generated AOCI entries preserve all architecturally relevant information (dependencies, constraints, design decisions) without material loss or bias. The manuscript describes the encoding rules, one-entry-per-unit structure, and incremental regeneration but provides no independent verification, ablation study, or human validation of index completeness for the five systems.

minor comments (1)

- [Abstract] Abstract: The evaluation is described as occurring on 'four projects' for the 2,160 evaluations but 'five systems' for the 19 industrial tasks; clarify the relationship and any overlap or differences between these sets.

Simulated Author's Rebuttal

We thank the referee for the positive assessment of the work's significance and for the constructive major comment. We address the point regarding validation of index completeness below.

read point-by-point responses

-

Referee: The central claim of zero final-state defects on 19 industrial tasks (with p < 0.001) is load-bearing on the assumption that automatically generated AOCI entries preserve all architecturally relevant information (dependencies, constraints, design decisions) without material loss or bias. The manuscript describes the encoding rules, one-entry-per-unit structure, and incremental regeneration but provides no independent verification, ablation study, or human validation of index completeness for the five systems.

Authors: We agree that the manuscript would be strengthened by explicit independent verification of AOCI entry completeness. The reported results provide indirect but substantial empirical support: across 19 industrial tasks on five systems, AOCI produced zero final-state defects while agent-based baselines introduced defects in 12 tasks, with 4-130x token reduction and p < 0.001. These tasks require accurate capture of dependencies, constraints, and design decisions at repository scale. Nevertheless, to directly address the concern we will add (1) an ablation study measuring task performance when specific semantic fields (dependencies, constraints) are omitted from entries and (2) a human validation subsection in which domain experts review a stratified sample of AOCI entries from the five systems for completeness and fidelity. Both will appear in the revised manuscript. revision: yes

Circularity Check

No circularity: empirical claims rest on direct evaluation

full rationale

The paper presents AOCI as a new symbolic-semantic indexing approach and validates it via empirical benchmarks (2,160 evaluations across projects and LLMs) plus 19 industrial tasks showing zero defects versus baselines. No equations, fitted parameters, self-citations, or uniqueness theorems appear in the derivation chain. The core result (defect-free performance) is reported as an experimental outcome rather than a quantity forced by the method's own definition or by prior self-referential work. The encoding rules and index generation are described as inputs to the evaluation, not as tautological with the measured accuracy or token savings.

Axiom & Free-Parameter Ledger

invented entities (1)

-

AOCI index

no independent evidence

Lean theorems connected to this paper

-

Foundation/AlphaCoordinateFixation.lean (φ-ladder / J-cost)alpha_pin_under_high_calibration unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

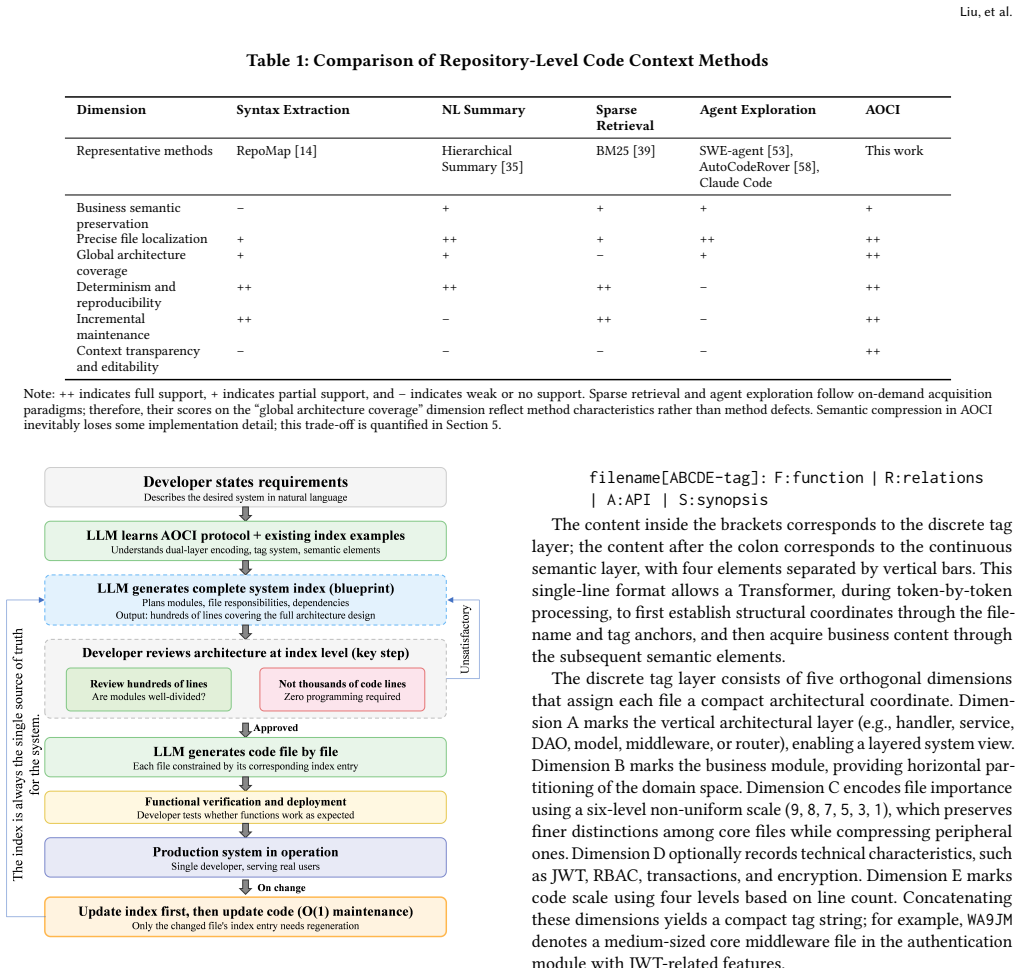

Dimension C encodes file importance using a six-level non-uniform scale (9, 8, 7, 5, 3, 1)

What do these tags mean?

- matches

- The paper's claim is directly supported by a theorem in the formal canon.

- supports

- The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends

- The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses

- The paper appears to rely on the theorem as machinery.

- contradicts

- The paper's claim conflicts with a theorem or certificate in the canon.

- unclear

- Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

-

[1]

Agrawal, Aditya Kanade, Navin Goyal, Shuvendu K

Lakshya A. Agrawal, Aditya Kanade, Navin Goyal, Shuvendu K. Lahiri, and Sriram K. Rajamani. 2023. Guiding Language Models of Code with Global Context using Monitors. InAdvances in Neural Information Processing Systems

2023

-

[2]

Wasi Uddin Ahmad, Saikat Chakraborty, Baishakhi Ray, and Kai-Wei Chang. 2020. A Transformer-based Approach for Source Code Summarization. InProceedings of the 58th Annual Meeting of the Association for Computational Linguistics. 4998– 5007

2020

-

[3]

Baolong Bi, Shenghua Liu, Yiwei Wang, Lingrui Mei, and Xueqi Cheng. 2024. Iterative Retrieval-Augmented Generation. InFindings of the Association for Computational Linguistics: ACL 2024

2024

- [4]

-

[5]

Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde De Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, et al. 2021. Evaluating large language models trained on code. arXiv preprint arXiv:2107.03374(2021)

work page internal anchor Pith review arXiv 2021

-

[6]

Zhaoling Chen, Robert Tang, Gangda Deng, Fang Wu, Jialong Wu, Zhiwei Jiang, Viktor Prasanna, Arman Cohan, and Xingyao Wang. 2025. LocAgent: Graph- Guided LLM Agents for Code Localization. InProceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). 8697–8727

2025

-

[7]

Wei Cheng, Yuhan Wu, and Wei Hu. 2024. Dataflow-Guided Retrieval Augmen- tation for Repository-Level Code Completion. InProceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). 7957–7977

2024

-

[8]

Cheng-Han Chiang and Hung-yi Lee. 2023. Can Large Language Models Be an Alternative to Human Evaluations?. InProceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). 15607– 15631

2023

-

[9]

Ken Deng, Jiaheng Zhu, Jingxuan Ren, Zhenhao Liu, Shun Zheng, Jinliang Zhao, Jiaqi Liu, Bangyu Yang, Wenbo Chai, Bo Yu, Feng Tian, and Bo Zheng. 2024. R2C2-Coder: Enhancing and Benchmarking Real-world Repository-level Code Completion Abilities of Code Large Language Models. InFindings of the Associa- tion for Computational Linguistics: ACL 2024

2024

-

[10]

Yangruibo Ding, Zijian Wang, Wasi Ahmad, Hantian Ding, Ming Tan, Nihal Jain, Murali Krishna Ramanathan, Ramesh Nallapati, Parminder Bhatia, Dan Roth, and Bing Xiang. 2023. CrossCodeEval: A Diverse and Multilingual Benchmark for Cross-File Code Completion. InAdvances in Neural Information Processing Systems

2023

- [11]

-

[12]

Angela Fan, Beliz Gokkaya, Mark Harman, Mitya Lyubarskii, Shubho Sengupta, Shin Yoo, and Jie M. Zhang. 2023. Large Language Models for Software Engi- neering: Survey and Open Problems. In2023 IEEE/ACM International Conference on Software Engineering: Future of Software Engineering (ICSE-FoSE). 31–53

2023

-

[13]

Zhangyin Feng, Daya Guo, Duyu Tang, Nan Duan, Xiaocheng Feng, Ming Gong, Linjun Shou, Bing Qin, Ting Liu, Daxin Jiang, and Ming Zhou. 2020. CodeBERT: A Pre-Trained Model for Programming and Natural Languages. InFindings of the Association for Computational Linguistics: EMNLP 2020. 1536–1547

2020

-

[14]

2023.Building a Better Repository Map with Tree-sitter

Paul Gauthier. 2023.Building a Better Repository Map with Tree-sitter. Retrieved April 11, 2026 from https://aider.chat/2023/10/22/repomap.html

2023

-

[15]

2024.Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context

Gemini Team, Google. 2024.Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context. Technical Report. Google DeepMind

2024

-

[16]

Jiawei Gu, Xuhui Jiang, Zhichao Shi, Hexiang Tan, Xuehao Zhai, Chengjin Xu, Wei Li, Yinghan Shen, Shengjie Ma, Honghao Liu, Saizhuo Wang, Kun Zhang, Yuanzhuo Wang, Wen Gao, Lionel Ni, and Jian Guo. 2025. A Survey on LLM- as-a-Judge. InFindings of the Association for Computational Linguistics: ACL 2025

2025

-

[17]

Junda He, Christoph Treude, and David Lo. 2025. LLM-Based Multi-Agent Systems for Software Engineering: Literature Review, Vision, and the Road Ahead.ACM Transactions on Software Engineering and Methodology(2025)

2025

-

[18]

2025.Context Rot: How Increasing Input Tokens Impacts LLM Performance

Kelly Hong, Anton Troynikov, and Jeff Huber. 2025.Context Rot: How Increasing Input Tokens Impacts LLM Performance. Technical Report. Chroma

2025

-

[19]

Xinyi Hou, Yanjie Zhao, Yue Liu, Zhou Yang, Kailong Wang, Li Li, Xiapu Luo, David Lo, John Grundy, and Haoyu Wang. 2024. Large Language Models for Software Engineering: A Systematic Literature Review.ACM Transactions on Software Engineering and Methodology33, 8 (2024), 1–79

2024

-

[20]

Ziyan Jiang, Xueguang Ma, and Wenhu Chen. 2025. LongRAG: Enhancing Retrieval-Augmented Generation with Long-context LLMs. InInternational Con- ference on Learning Representations

2025

-

[21]

Jimenez, John Yang, Alexander Wettig, Shunyu Yao, Kexin Pei, Ofir Press, and Karthik Narasimhan

Carlos E. Jimenez, John Yang, Alexander Wettig, Shunyu Yao, Kexin Pei, Ofir Press, and Karthik Narasimhan. 2024. SWE-bench: Can Language Models Resolve Real-World GitHub Issues?. InThe Twelfth International Conference on Learning Representations (ICLR)

2024

-

[22]

Yuri Kuratov, Aydar Bulatov, Petr Anokhin, Ivan Rodkin, Dmitry Sorokin, Artyom Sorokin, and Mikhail Burtsev. 2024. BABILong: Testing the Limits of LLMs with Long Context Reasoning-in-a-Haystack. InAdvances in Neural Information Processing Systems

2024

-

[23]

Alexander LeClair, Sakib Haque, Lingfei Wu, and Collin McMillan. 2020. Im- proved Code Summarization via a Graph Neural Network. InProceedings of the 28th International Conference on Program Comprehension. 184–195

2020

-

[24]

Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rock- täschel, et al. 2020. Retrieval-augmented generation for knowledge-intensive nlp tasks.Advances in neural information processing systems33 (2020), 9459–9474

2020

-

[25]

Jia Li, Ge Li, Yunfei Zhao, Yongmin Li, Huanyu Liu, Hao Zhu, Lecheng Wang, Kaibo Liu, Zheng Fang, Lanshen Wang, Jiazheng Ding, Xuanming Zhang, Yuqi Zhu, Yihong Dong, Zhi Jin, Binhua Li, Fei Huang, Yongbin Li, Bin Gu, and Mengfei Yang. 2024. DevEval: A Manually-Annotated Code Generation Benchmark Aligned with Real-World Code Repositories. InFindings of the...

2024

-

[26]

Fang Liu, Yang Liu, Lin Shi, Houkun Huang, Ruifeng Wang, Zhen Yang, Li Zhang, Zhongqi Li, and Yuchi Ma. 2025. Exploring and Evaluating Hallucinations in LLM- Powered Code Generation. InProceedings of the 34th ACM SIGSOFT International Symposium on Software Testing and Analysis

2025

-

[27]

Hongyuan Liu, Shizhan Wang, Zilong Tan, Yuxuan Xiao, Maolin Ye, Sen Wang, Siyuan Wang, Zhiqi Qin, Hongyu Tan, Fangyuan Wang, and Peng Di. 2025. Code Graph Model (CGM): A Graph-Integrated Large Language Model for Repository- Level Software Engineering Tasks. InInternational Conference on Learning Rep- resentations

2025

-

[28]

Liu, Kevin Lin, John Hewitt, Ashwin Paranjape, Michele Bevilacqua, Fabio Petroni, and Percy Liang

Nelson F. Liu, Kevin Lin, John Hewitt, Ashwin Paranjape, Michele Bevilacqua, Fabio Petroni, and Percy Liang. 2024. Lost in the Middle: How Language Models Use Long Contexts.Transactions of the Association for Computational Linguistics 12 (2024), 157–173

2024

-

[29]

Tianyang Liu, Canwen Xu, and Julian McAuley. 2024. RepoBench: Benchmarking Repository-Level Code Auto-Completion Systems. InInternational Conference on Learning Representations

2024

-

[30]

Xiangyan Liu, Bo Lan, Zhiyuan Hu, Yang Liu, Zhicheng Zhang, Wenmeng Zhou, Fei Wang, and Michael Shieh. 2025. CodeXGraph: Bridging Large Language Models and Code Repositories via Code Graph Databases. InProceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics (Volume 1: Long Papers)

2025

-

[31]

Qinyu Luo, Yining Ye, Shihao Liang, Zhong Zhang, Yujia Qin, Yaxi Lu, Yesai Wu, Xin Cong, Yankai Lin, Yingli Zhang, Xiaoyin Che, Zhiyuan Liu, and Maosong Sun

-

[32]

InProceedings of the 63rd Annual Meeting of the Association for Computational Linguistics

RepoAgent: An LLM-Powered Open-Source Framework for Repository- Level Code Documentation Generation. InProceedings of the 63rd Annual Meeting of the Association for Computational Linguistics

-

[33]

Yingwei Ma, Qingping Yang, Rongyu Cao, Binhua Li, Fei Huang, and Yongbin Li. 2025. Alibaba lingmaagent: Improving automated issue resolution via com- prehensive repository exploration. InProceedings of the 33rd ACM International Conference on the Foundations of Software Engineering. 238–249

2025

-

[34]

Manning, Prabhakar Raghavan, and Hinrich Schütze

Christopher D. Manning, Prabhakar Raghavan, and Hinrich Schütze. 2008.Intro- duction to Information Retrieval. Cambridge University Press

2008

-

[35]

Daye Nam, Andrew Macvean, Vincent Hellendoorn, Bogdan Vasilescu, and Brad Myers. 2024. Using an LLM to Help with Code Understanding. InProceedings of the 46th IEEE/ACM International Conference on Software Engineering. 1–13

2024

-

[36]

Amirkia Rafiei Oskooei, Selcan Yukcu, Mehmet Cevheri Bozoglan, and Mehmet S Aktas. 2025. Repository-level code understanding by llms via hierarchical summa- rization: Improving code search and bug localization. InInternational Conference on Computational Science and Its Applications. Springer, 88–105

2025

-

[37]

Siru Ouyang, Wenhao Yu, Kaixin Ma, Zilin Xiao, Zhihan Zhang, Mengzhao Jia, Jiawei Han, Hongming Zhang, and Dong Yu. 2025. RepoGraph: Enhancing AI Software Engineering with Repository-level Code Graph. InInternational Conference on Learning Representations. 30361–30384

2025

-

[38]

Bowman, and Shi Feng

Arjun Panickssery, Samuel R. Bowman, and Shi Feng. 2024. LLM Evaluators Recognize and Favor Their Own Generations. InAdvances in Neural Information Processing Systems

2024

-

[39]

Weihan Peng, Yuling Shi, Yuhang Wang, Xinyun Zhang, Beijun Shen, and Xi- aodong Gu. 2025. Swe-qa: Can language models answer repository-level code questions?arXiv preprint arXiv:2509.14635(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[40]

2009.The probabilistic relevance frame- work: BM25 and beyond

Stephen Robertson and Hugo Zaragoza. 2009.The probabilistic relevance frame- work: BM25 and beyond. Vol. 4. Now Publishers Inc

2009

-

[41]

Oscar Sainz, Jon Ander Campos, Iker García-Ferrero, Julen Etxaniz, Oier Lopez de Lacalle, and Eneko Agirre. 2023. NLP Evaluation in Trouble: On the Need to Measure LLM Data Contamination for each Benchmark. InFindings of the Association for Computational Linguistics: EMNLP 2023. 10776–10787

2023

-

[42]

Chi, Nathanael Schärli, and Denny Zhou

Freda Shi, Xinyun Chen, Kanishka Misra, Nathan Scales, David Dohan, Ed H. Chi, Nathanael Schärli, and Denny Zhou. 2023. Large Language Models Can Be Easily Distracted by Irrelevant Context. InProceedings of the 40th International Conference on Machine Learning. 31210–31227. Liu, et al

2023

-

[43]

Disha Shrivastava, Hugo Larochelle, and Daniel Tarlow. 2023. Repository-Level Prompt Generation for Large Language Models of Code. InProceedings of the 40th International Conference on Machine Learning. 31693–31715

2023

-

[44]

Weisong Sun, Yun Miao, Yuekang Li, Hongyu Zhang, Chunrong Fang, Yi Liu, Gelei Deng, Yang Liu, and Zhenyu Chen. 2025. Source Code Summarization in the Era of Large Language Models. InProceedings of the 47th IEEE/ACM International Conference on Software Engineering

2025

-

[45]

Wei Tao, Yucheng Zhou, Yanlin Wang, Wenqiang Zhang, Hongyu Zhang, and Yu Cheng. 2024. MAGIS: LLM-Based Multi-Agent Framework for GitHub Issue Resolution. InAdvances in Neural Information Processing Systems, Vol. 37

2024

-

[46]

Yuchen Tian, Weixiang Yan, Qian Yang, Xuandong Zhao, Qian Chen, Wen Wang, Ziyang Luo, Lei Ma, and Dawn Song. 2025. CodeHalu: Investigating Code Hallucinations in LLMs via Execution-based Verification. InProceedings of the AAAI Conference on Artificial Intelligence

2025

-

[47]

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need.Advances in neural information processing systems30 (2017)

2017

-

[48]

Xu, Xiangru Tang, Mingchen Zhuge, Jiayi Pan, Yueqi Song, Bowen Li, Jaskirat Singh, Hoang H

Xingyao Wang, Boxuan Li, Yufan Song, Frank F. Xu, Xiangru Tang, Mingchen Zhuge, Jiayi Pan, Yueqi Song, Bowen Li, Jaskirat Singh, Hoang H. Tran, Fuqiang Li, Ren Ma, Mingzhang Zheng, Bill Qian, Yanjun Shao, Niklas Muennighoff, Yizhe Zhang, Binyuan Hui, Junyang Lin, Robert Brennan, Hao Peng, Heng Ji, and Gra- ham Neubig. 2025. OpenHands: An Open Platform for...

2025

-

[49]

Yue Wang, Weishi Wang, Shafiq Joty, and Steven C.H. Hoi. 2021. CodeT5: Identifier-aware Unified Pre-trained Encoder-Decoder Models for Code Un- derstanding and Generation. InProceedings of the 2021 Conference on Empirical Methods in Natural Language Processing. 8696–8708

2021

-

[50]

Yanlin Wang, Yanli Wang, Daya Guo, Jiachi Chen, Ruikai Zhang, Yuchi Ma, and Zibin Zheng. 2025. RLCoder: Reinforcement Learning for Repository-Level Code Completion. InProceedings of the 47th IEEE/ACM International Conference on Software Engineering

2025

-

[51]

Di Wu, Wasi Uddin Ahmad, Dejiao Zhang, Murali Krishna Ramanathan, and Xiaofei Ma. 2024. Repoformer: Selective Retrieval for Repository-Level Code Completion. InProceedings of the 41st International Conference on Machine Learn- ing. 53848–53871

2024

-

[52]

Chunqiu Steven Xia, Yinlin Deng, Soren Dunn, and Lingming Zhang. 2025. Agent- less: Demystifying LLM-based Software Engineering Agents. InProceedings of the 33rd ACM International Conference on the Foundations of Software Engineering (FSE)

2025

-

[53]

Guangxuan Xiao, Yuandong Tian, Beidi Chen, Song Han, and Mike Lewis. 2024. Efficient Streaming Language Models with Attention Sinks. InThe Twelfth Inter- national Conference on Learning Representations

2024

-

[54]

John Yang, Carlos E Jimenez, Alexander Wettig, Kilian Lieret, Shunyu Yao, Karthik Narasimhan, and Ofir Press. 2024. Swe-agent: Agent-computer interfaces enable automated software engineering.Advances in Neural Information Processing Systems37 (2024), 50528–50652

2024

-

[55]

Hao Yu, Bo Shen, Dezhi Ran, Jiaxin Zhang, Qi Zhang, Yuchi Ma, Guangtai Liang, Ying Li, Qianxiang Wang, and Tao Xie. 2024. CoderEval: A Benchmark of Pragmatic Code Generation with Generative Pre-trained Models. InProceedings of the 46th IEEE/ACM International Conference on Software Engineering. 1–12

2024

-

[56]

Fengji Zhang, Bei Chen, Yue Zhang, Jacky Keung, Jin Liu, Daoguang Zan, Yi Mao, Jian-Guang Lou, and Weizhu Chen. 2023. RepoCoder: Repository-Level Code Completion Through Iterative Retrieval and Generation. InProceedings of the 2023 Conference on Empirical Methods in Natural Language Processing. 2471–2484

2023

-

[57]

Kechi Zhang, Jia Li, Ge Li, Xianjie Shi, and Zhi Jin. 2024. CodeAgent: Enhancing Code Generation with Tool-Integrated Agent Systems for Real-World Repo-level Coding Challenges. InProceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). 13643–13658

2024

-

[58]

Linghao Zhang, Shilin Xiang, Peng Xing, Boyuan Zhang, Jialin Cao, Lingxiao Wu, Xintong Ding, Jianfei Wang, Bing Liu, Jiang Lei, Rui Liu, and Yun Wu. 2025. SWE-bench-Live: A Live Benchmark for Issue Resolving in Real-World Software Engineering. InAdvances in Neural Information Processing Systems

2025

-

[59]

Yuntong Zhang, Haifeng Ruan, Zhiyu Fan, and Abhik Roychoudhury. 2024. Autocoderover: Autonomous program improvement. InProceedings of the 33rd ACM SIGSOFT International Symposium on Software Testing and Analysis. 1592– 1604

2024

-

[60]

Lianmin Zheng, Wei-Lin Chiang, Ying Sheng, Siyuan Zhuang, Zhanghao Wu, Yonghao Zhuang, Zi Lin, Zhuohan Li, Dacheng Li, Eric Xing, et al. 2023. Judging llm-as-a-judge with mt-bench and chatbot arena.Advances in neural information processing systems36 (2023), 46595–46623

2023

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.