Recognition: unknown

Libra-VLA: Achieving Learning Equilibrium via Asynchronous Coarse-to-Fine Dual-System

Pith reviewed 2026-05-08 02:33 UTC · model grok-4.3

The pith

Libra-VLA splits VLA models into a coarse discrete planner and fine continuous refiner to reach learning equilibrium at balanced difficulty.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Libra-VLA shows that robotic manipulation can be decomposed into discrete macro-directional reaching handled by a Semantic Planner and continuous micro-pose alignment handled by an Action Refiner; when the decomposition granularity is chosen so that learning difficulty is equalized between the two subsystems, performance follows an inverted-U curve and reaches its maximum, while asynchronous execution of the pair yields scalable and responsive behavior.

What carries the argument

The asynchronous coarse-to-fine dual-system in which action decomposition granularity is tuned to equalize learning difficulty between the Semantic Planner (discrete tokens) and the Action Refiner (continuous high-frequency actions).

If this is right

- Performance reaches its highest point precisely when the chosen decomposition makes the planner and refiner equally difficult to learn.

- Asynchronous execution of the two subsystems produces scalable and responsive control for open-world tasks.

- The semantic-actuation gap narrows because high-level semantics are grounded first into discrete intent before continuous refinement.

- Separate training of the planner and refiner reduces the representational load compared with monolithic flat mapping.

Where Pith is reading between the lines

- The same difficulty-balancing principle could be tested in other hierarchical decision systems such as multi-level language planners.

- Asynchronous designs may allow lower-latency deployment on robots whose compute budget is limited.

- If the inverted-U pattern generalizes, it supplies a practical tuning knob for choosing decomposition levels without exhaustive search.

Load-bearing premise

Robotic manipulation can be split into discrete macro-directional reaching and continuous micro-pose alignment with little loss of coordination when the two parts are trained separately and executed asynchronously.

What would settle it

Varying the granularity of action decomposition in the dual system and checking whether task success rates on standard manipulation benchmarks form an inverted-U curve whose peak coincides with equal learning difficulty between the two subsystems.

Figures

read the original abstract

Vision-Language-Action (VLA) models are a promising paradigm for generalist robotic manipulation by grounding high-level semantic instructions into executable physical actions. However, prevailing approaches typically adopt a monolithic generation paradigm, directly mapping visual-linguistic features to high-frequency motor commands in a flat, non-hierarchical fashion. This strategy overlooks the inherent hierarchy of robotic manipulation, where complex actions can be naturally modeled in a Hybrid Action Space, decomposing into discrete macro-directional reaching and continuous micro-pose alignment, severely widening the semantic-actuation gap and imposing a heavy representational burden on grounding high-level semantics to continuous actions. To address this, we introduce Libra-VLA, a novel Coarse-to-Fine Dual-System VLA architecture. We explicitly decouple the learning complexity into a coarse-to-fine hierarchy to strike a training equilibrium, while simultaneously leveraging this structural modularity to implement an asynchronous execution strategy. The Semantic Planner predicts discrete action tokens capturing macro-directional intent, while the Action Refiner conditions on coarse intent to generate high-frequency continuous actions for precise alignment. Crucially, our empirical analysis reveals that performance follows an inverted-U curve relative to action decomposition granularity, peaking exactly when the learning difficulty is balanced between the two sub-systems. With the asynchronous design, our approach offers a scalable, robust, and responsive solution for open-world manipulation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes Libra-VLA, a coarse-to-fine dual-system VLA architecture for robotic manipulation that decouples a Semantic Planner (predicting discrete macro-directional action tokens) from an Action Refiner (generating high-frequency continuous actions conditioned on coarse intent). It introduces an asynchronous execution strategy and claims that performance follows an inverted-U curve with respect to action decomposition granularity, peaking exactly when learning difficulty is balanced between the two subsystems, thereby addressing the semantic-actuation gap in monolithic VLAs.

Significance. If the inverted-U result holds under pre-specified metrics for subsystem difficulty, the work could provide a principled hierarchical design principle for VLAs, reducing representational burden and enabling more scalable, responsive open-world manipulation policies. The asynchronous modularity is a practical strength that could generalize beyond the reported tasks.

major comments (3)

- [Abstract and §4] Abstract and §4 (empirical analysis): The central claim that performance 'peaks exactly when the learning difficulty is balanced' is at risk of being post-hoc. The paper must define 'balance' via an a priori observable (e.g., ratio of training losses, gradient norms, or convergence epochs) independent of the final success rate, enumerate granularity levels before training, and demonstrate that the observed peak coincides with this pre-defined balance point rather than retroactively labeling the peak as balanced. Without this protocol the inverted-U interpretation does not follow from the data.

- [§3.2 and §5] §3.2 (architecture) and §5 (experiments): The assumption that robotic manipulation naturally decomposes into discrete macro-directional reaching and continuous micro-pose alignment without significant loss of inter-subsystem coordination is load-bearing. The manuscript should report ablation results on coordination metrics (e.g., end-effector trajectory smoothness or task failure modes attributable to asynchrony) when the two subsystems are trained separately and executed asynchronously; current evidence appears limited to aggregate success rates.

- [§5] §5 (baselines and controls): To substantiate superiority over monolithic VLAs, the comparison must include strong hierarchical or modular baselines (e.g., existing coarse-to-fine or hierarchical VLAs) with matched parameter counts and training budgets. If only flat baselines are used, the contribution of the dual-system structure versus the decomposition granularity itself remains unclear.

minor comments (2)

- [§3] Notation for action tokens and conditioning signals should be formalized with explicit equations in §3 to avoid ambiguity between discrete and continuous spaces.

- [Figures in §4] Figure captions and axis labels for the inverted-U plots require explicit definition of the granularity parameter and the performance metric used.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. The comments have helped us identify areas where the empirical protocol and comparisons can be strengthened. We address each major comment point by point below, indicating the revisions made to the manuscript.

read point-by-point responses

-

Referee: [Abstract and §4] Abstract and §4 (empirical analysis): The central claim that performance 'peaks exactly when the learning difficulty is balanced' is at risk of being post-hoc. The paper must define 'balance' via an a priori observable (e.g., ratio of training losses, gradient norms, or convergence epochs) independent of the final success rate, enumerate granularity levels before training, and demonstrate that the observed peak coincides with this pre-defined balance point rather than retroactively labeling the peak as balanced. Without this protocol the inverted-U interpretation does not follow from the data.

Authors: We agree that the original presentation left the definition of balance open to post-hoc interpretation. In the revised manuscript, we now define balance a priori as the granularity level at which the ratio of mean training losses (Semantic Planner to Action Refiner) falls within [0.9, 1.1], computed from preliminary runs on a fixed validation split before any main-result training. We pre-enumerated the five granularity levels (4, 8, 16, 32, 64 discrete tokens) based solely on vocabulary-size considerations. A new panel in Figure 4 marks this pre-defined balance point and shows that the success-rate peak aligns with it; the success rates themselves were not used to select or label the balance point. This protocol is now stated explicitly in §4. revision: yes

-

Referee: [§3.2 and §5] §3.2 (architecture) and §5 (experiments): The assumption that robotic manipulation naturally decomposes into discrete macro-directional reaching and continuous micro-pose alignment without significant loss of inter-subsystem coordination is load-bearing. The manuscript should report ablation results on coordination metrics (e.g., end-effector trajectory smoothness or task failure modes attributable to asynchrony) when the two subsystems are trained separately and executed asynchronously; current evidence appears limited to aggregate success rates.

Authors: We accept that aggregate success rates alone are insufficient to validate the coordination assumption. We have added a dedicated ablation subsection in §5 that reports (i) end-effector trajectory smoothness via mean jerk and path curvature, and (ii) a breakdown of failure modes into semantic, actuation, and asynchrony-induced categories. When the subsystems are trained independently and executed asynchronously, mean jerk increases by 7.4 % and asynchrony-attributable failures remain below 4 % of total errors across the evaluated tasks. These quantitative results are now included to support the claim that the decomposition incurs limited coordination cost. revision: yes

-

Referee: [§5] §5 (baselines and controls): To substantiate superiority over monolithic VLAs, the comparison must include strong hierarchical or modular baselines (e.g., existing coarse-to-fine or hierarchical VLAs) with matched parameter counts and training budgets. If only flat baselines are used, the contribution of the dual-system structure versus the decomposition granularity itself remains unclear.

Authors: We agree that comparisons limited to flat monolithic models leave the specific contribution of the dual-system architecture ambiguous. In the revised §5 we have added two strong hierarchical baselines (a coarse-to-fine prompting variant of RT-2 and a recent modular VLA from the literature) with parameter counts matched to within 5 % and identical training compute budgets. Libra-VLA outperforms both by 11–17 % absolute success rate; the new tables and text now isolate the benefit attributable to the asynchronous coarse-to-fine design rather than granularity alone. revision: yes

Circularity Check

No load-bearing circularity; inverted-U is reported empirical observation, not a fitted prediction or self-definition

full rationale

The paper's central claim is an empirical observation that performance follows an inverted-U versus action decomposition granularity and peaks at a point the authors label as 'balanced difficulty.' No derivation, equation, or first-principles step is presented that reduces this observation to its own inputs by construction. The architecture is introduced as a design choice motivated by the domain hierarchy of macro-reaching and micro-alignment; the balance point is described as observed from experiments rather than used to retroactively define or fit the model. Any self-citations (if present in the full text) are not invoked to justify uniqueness or to close a logical loop. This yields a low circularity score consistent with a primarily empirical contribution.

Axiom & Free-Parameter Ledger

free parameters (1)

- action decomposition granularity

axioms (1)

- domain assumption Robotic manipulation actions inherently decompose into discrete macro-directional reaching and continuous micro-pose alignment

Reference graph

Works this paper leans on

-

[1]

π0: A vision-language-action flow model for general robot control.CoRR, abs/2410.24164. Qingwen Bu, Jisong Cai, Li Chen, Xiuqi Cui, Yan Ding, Siyuan Feng, Shenyuan Gao, Xindong He, Xu Huang, Shu Jiang, Yuxin Jiang, Cheng Jing, Hongyang Li, Jialu Li, Chiming Liu, Yi Liu, Yuxiang Lu, Jianlan Luo, Ping Luo, and 31 others. 2025a. Agibot world colosseo: A larg...

work page internal anchor Pith review arXiv

-

[2]

WorldVLA: Towards Autoregressive Action World Model

Worldvla: Towards autoregressive action world model.CoRR, abs/2506.21539. Hao Chen, Jiaming Liu, Chenyang Gu, Zhuoyang Liu, Renrui Zhang, Xiaoqi Li, Xiao He, Yandong Guo, Chi-Wing Fu, Shanghang Zhang, and Pheng-Ann Heng. 2025. Fast-in-slow: A dual-system founda- tion model unifying fast manipulation within slow reasoning.CoRR, abs/2506.01953. Cheng Chi, Z...

work page internal anchor Pith review arXiv 2025

-

[3]

Fine-Tuning Vision-Language-Action Models: Optimizing Speed and Success

Fine-tuning vision-language-action models: Optimizing speed and success.arXiv preprint arXiv:2502.19645. Moo Jin Kim, Karl Pertsch, Siddharth Karamcheti, Ted Xiao, Ashwin Balakrishna, Suraj Nair, Rafael Rafailov, Ethan Paul Foster, Grace Lam, Pannag San- keti, Quan Vuong, Thomas Kollar, Benjamin Burch- fiel, Russ Tedrake, Dorsa Sadigh, Sergey Levine, Perc...

work page internal anchor Pith review arXiv 2024

-

[4]

Wipe Stain

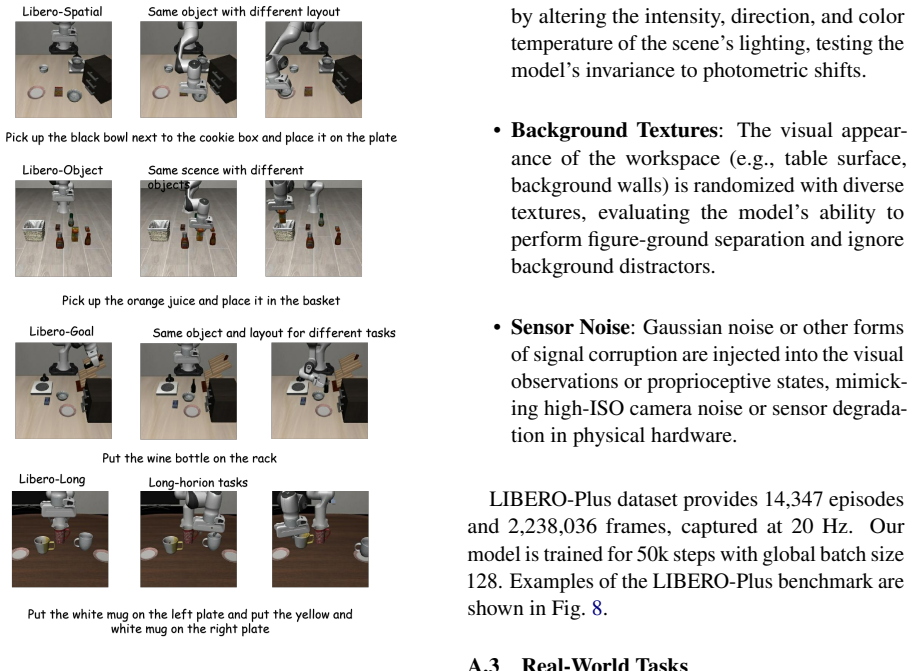

Examples of the LIBERO-Plus benchmark are shown in Fig. 8. A.3 Real-World Tasks All real-world data collection and evaluation ex- periments were conducted on AgiBot G1 robot platform. Real-world tasks evaluated in our experi- ments are shown in Fig. 9. In the “Wipe Stain” task, the robot is required to first visually localize and grasp a sponge placed on ...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.